I've recently been working on some quality of life updates for the Drupalize.Me codebase and various DevOps things. My goal is to pay down some of our technical debt, and generally make doing development on the site a more pleasant experience. In doing so I noticed that I was spending a lot of time waiting for MySQL imports -- that means others probably were too. I decided to see what I could do about it, and here's what I learned.

That MySQL table is HUGE!

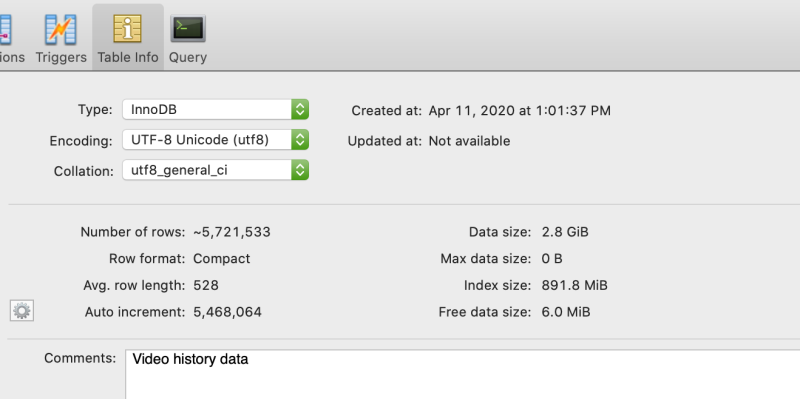

The database for the site has a table named vidhist. That's short for video history. It has a record in it for every instance someone interacts with one of the videos on our site. We use the data to do things like show you which videos you've watched, show you a history of your usage over time, and to resume where you left off last time you played a specific video. Over time, this table has grown... and grown... and grown. As of this morning, there are a little over 5.7 million records in the table. And it's 2.8GiB in size!

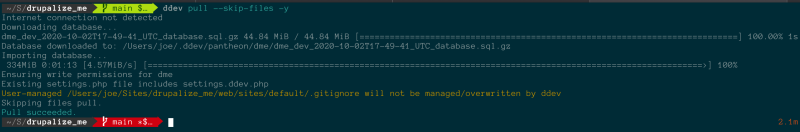

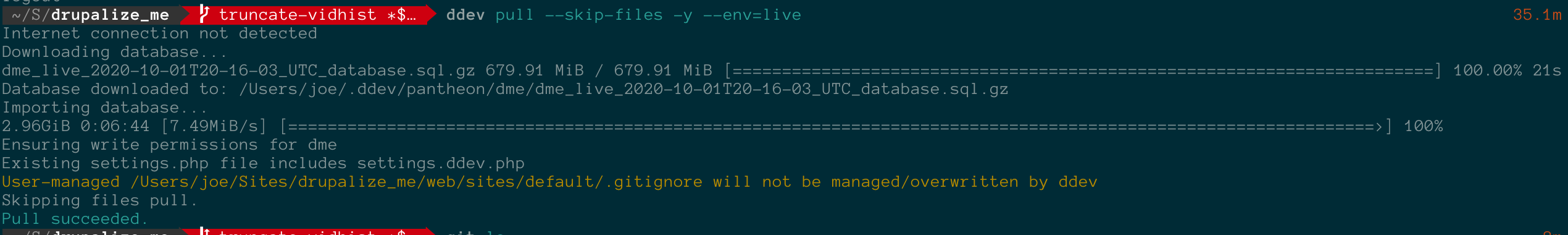

That is all just fine -- except when you need to downsync a copy of the database to your localhost for development. Downloading the backup can take a while. The file is about 650Mb when compressed. Just importing all those records takes forever. I use DDEV-local for development. Pulling a copy of the database from Pantheon and importing it takes about 30 minutes! It's not as bad when exporting/importing locally. But you can imagine how working on testing an update hook could get quite tedious.

I usually truncate the vidhist table on my localhost just so I don't have to deal with it. The data is important in production and for historical records, but is rarely necessary in development environments.

To get an idea of the impact this has, here are some of the other places this table can cause issues:

- Downsync to local environment takes about 30 minutes

- Cloning a database from one environment to another in Pantheon is slow; I don't have great metrics on this other than just watching the spinner for what seems like forever.

- We use Tugboat.qa for automatic preview environments for every pull request. It's already quite fast to build a new preview despite the large DB size (about 2 minutes), but each preview instance is using about 8Gb of space, so we're often running into our limit. When the script runs to update our base preview image every night it takes about 18-20 minutes.

- We occasionally use Pantheon's multidev feature, and building those environments takes just as long as the

ddev pullcommand due in part to the large table. - We have an integration tests suite that takes about 11 minutes to run. Combined with the need to build a testing environment, it can take over 40 minutes for our tests to run.

Most of this is just robots doing work in the background -- so it's pretty easy to look the other way. But, there's a few places where this is really affecting productivity. So, I made a few changes that'll hopefully help in the future.

Clean up the database on Pantheon

Using Pantheon's Quicksilver hooks we execute the following script anytime the database is cloned from the live environment to any other environment:

<?php /**

* @file

* Remove most of the records from the vidhist table to keep the size down.

*/

if (defined('PANTHEON_ENVIRONMENT') && (PANTHEON_ENVIRONMENT !== 'live')) {

echo "Downsize the vidhist database...\n";

define('DRUPAL_ROOT', $_SERVER['DOCUMENT_ROOT']);

require_once DRUPAL_ROOT . '/includes/bootstrap.inc';

drupal_bootstrap(DRUPAL_BOOTSTRAP_DATABASE);

db_query('CREATE TABLE vidhist_backup AS SELECT * FROM vidhist WHERE updated > UNIX_TIMESTAMP(DATE_SUB(NOW(), INTERVAL 1 MONTH))');

db_query('TRUNCATE vidhist');

db_query('LOCK TABLE vidhist WRITE, vidhist_backup WRITE');

db_query('INSERT INTO vidhist SELECT * FROM vidhist_backup');

db_query('UNLOCK TABLES');

db_query('DROP TABLE vidhist_backup');

echo "Database downsize complete.\n";

}

This code is executed by Pantheon (see configuration file below) whenever the database is cloned and goes through these steps:

- Verify that this is NOT the production environment

- Bootstrap Drupal enough so that we can query the database

- Run a series of queries that removes all but the last month's worth of data from the vidhist table. If you're curious, we run multiple queries like this instead of a single

DELETE WHERE ...query becauseTRUNCATEis significantly faster. So we first create a temp table, then copy some data into it, then truncate the vidhist table, copy the temp data back into it, and delete the temp table.

We have a pantheon.yml similar to this which tells Pantheon where to find the script and when to run it.

# Pantheon config file.

api_version: 1

workflows:

clone_database:

after:

- type: webphp

description: Reduce size of the vidhist table in the DB.

script: private/scripts/quicksilver/truncate_vidhist.php

create_cloud_development_environment:

after:

- type: webphp

description: Reduce size of the vidhist table in the DB.

script: private/scripts/quicksilver/truncate_vidhist.php

As a result, the vidhist table on all non-production environments is a fraction of the original size. It's a relatively small change to make, but the impacts are huge.

Clone operations from non-production environments are significantly faster. And, since we configure DDEV to pull from the Pantheon dev environment by default when I do ddev pull on my local it's also much faster now. It's closer to 2 minutes instead of 30!

This also helps reduce our disk usage on Tugboat.qa. Because we have Tugboat configured to pull the database and files from the Pantheon test environment, it too gets a smaller vidhist table. Our build time for previews is almost a full minute faster with previews now building in an average of 1 minute 11 seconds!

Tip: You can use this same technique to do things like sanitize sensitive data in your database so that it doesn't get copied to development environments.

An aside about Tugboat

I originally updated our Tugboat config.yml file to perform this cleanup of the database after pulling it down from Pantheon in an attempt to use less resources there. I later added the script for cleaning up the Pantheon DB above. It looked like this:

update:

# Use the tugboat-specific Drupal settings

- cp "${TUGBOAT_ROOT}/.tugboat/settings.local.php" "${DOCROOT}/sites/default/"

- cp "${TUGBOAT_ROOT}/docroot/sites/default/default.settings_overrides.inc" "${DOCROOT}/sites/default/settings_overrides.inc"

# Generate a unique hash_salt to secure the site

- echo "\$settings['hash_salt'] = '$(openssl rand -hex 32)';" >> "${DOCROOT}/sites/default/settings.local.php"

# Import and sanitize a database backup from Pantheon

- terminus backup:get ${PANTHEON_SOURCE_SITE}.${PANTHEON_SOURCE_ENVIRONMENT} --to=/tmp/database.sql.gz --element=db

- drush -r "${DOCROOT}" sql-drop -y

- zcat /tmp/database.sql.gz | drush -r "${DOCROOT}" sql-cli

- rm /tmp/database.sql.gz

# Remove most of the records from the vidhist table.

- drush -r "${DOCROOT}" sql-query "CREATE TABLE vidhist_backup AS SELECT * FROM vidhist WHERE updated > UNIX_TIMESTAMP(DATE_SUB(NOW(), INTERVAL 1 MONTH));"

- drush -r "${DOCROOT}" sql-query "TRUNCATE vidhist;"

- drush -r "${DOCROOT}" sql-query "LOCK TABLE vidhist WRITE, vidhist_backup WRITE;"

- drush -r "${DOCROOT}" sql-query "INSERT INTO vidhist SELECT * FROM vidhist_backup;"

- drush -r "${DOCROOT}" sql-query "UNLOCK TABLES;"

- drush -r "${DOCROOT}" sql-query "DROP TABLE vidhist_backup;"

But, while writing this blog post I realized that's probably not necessary. Since Tugboat is pulling the database from the Pantheon test environment, not the live one, the table will have already been cleaned up. Which, also means updating the base preview in Tugboat is going to be significantly faster than I had originally thought. Just gotta go open a new PR...

Recap

I'm kind of embarrassed that it took me this long to address this issue. It's easy to say, "Meh, it's just a few minutes." But over time those minutes can really add up. Not to mention how frustrating it must be for someone trying to get started working on the site who isn't accustomed to going to make a cup of coffee while they wait for the DB to import.

I encourage you to occasionally step back and consider the everyday motions you go through without really thinking about them. There may be room for improvement.?>

Comments

Smart thoughts here. I need to dig into our tables to see where the fat and grizzle are.

A good place to start, even if you're not trimming content tables, is cache tables and anything else that contains ephemeral data that can be regenerated as needed. If you use Drush you can tell it to export only the structure of specific tables using the

--structure-tables-listflag of the https://drushcommands.com/drush-8x/sql/sql-dump/ command.And, the https://drushcommands.com/drush-8x/sql/sql-sanitize/ command is another useful one that will cleanup passwords/emails of users to help protect PII.

Great idea for dealing with moving big databases to different environments! Curious why you LOCK TABLES when adding the records back to the vidhist table.

My original thinking was that there is code on the site that can update existing records. For example, when someone is watching a video we update their record for that video every 30 seconds. And just keep adjusting the "last known position" rather than creating new records.

However, as I'm typing this I realize that it's probably not necessary in this case because by design those SQL statements are never going to run in an environment where that data consistency really matters. Or for that matter where someone might be interacting with the application at the same time. So they could be removed. Thanks for pointing that out.

I export my db with backup_migrate and would set your vidhist table to "export as empty table". Comfortable - and as I remember - it has drush commands for this to use in some workflow.

Yes, this is a great approach too. In our example we need to keep some of the records, but not all of them. However, we use a similar "export as empty table" option for things like all the cache_* and sessions tables.

Add new comment