We recently started using Vale to help automate the tedious task of enforcing our style guide. It has made reviews faster and reduced hard feelings between us. Emotions can run high when you feel someone is being overly scrupulous in reviewing something you’ve worked really hard to create.

Everything gets reviewed

Every content item we publish goes through a rigorous review process to ensure we're always putting our best foot forward. This review consists of a number of different steps:

- Technical review: Is the content technically correct? Do all the code samples work?

- Copy editing: Does it follow our style guide? Does it follow the Chicago Manual of Style formatting guidelines? Does it use proper grammar, spelling, etc.?

- Check for broken links and images

- Apply consistent Markdown formatting

Some of these things are objective. For example, we always write Drupal, never drupal. We always use italics for filenames and paths. And we always format Markdown lists using a `-` followed by a single space, never a `*`. These rules are simply not up for debate: you either followed them or you didn't. Most tutorials need at least a handful of these fixes.

Other style guidelines are more subjective. For example, we try to avoid passive voice, but there are exceptions. A technical review might point out multiple ways of accomplishing the same task, and we'll generally cover only one. We also avoid clichés, superlatives, and hyperbole. A single tutorial usually gets more than 10 of these suggestions. They are by far the more important things to focus on in a review, because they can have a real impact on the usefulness of the content.

> No one wants to be the jerk who points out dozens of formatting errors. And no one enjoys having their work nit-picked by their peers.

We've long talked about the value of a tool that could automate some of the steps in the review process: specifically, the objective ones. It's the same idea as Drupal developers using PHPCS to ensure their PHP code follows the Drupal coding standards, or JavaScript developers using Prettier to ensure consistent formatting.

Without such a tool, we spend a lot of time in the review process commenting on, and fixing, non-substantive things. That’s a distraction from the more important work of critiquing the content itself.

Let the robots do the nit-picking

Amber recently introduced me to Vale, a tool she learned about while attending the Write the Docs conference in Portland. We've since added it to our review workflow, along with remark for linting Markdown formatting, and we're loving it.
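Rules like our `-` list-marker convention are the kind of thing remark handles. As a minimal sketch, assuming remark-cli plus the `remark-preset-lint-recommended` and `remark-lint-unordered-list-marker-style` packages are installed, a `.remarkrc.yml` like the following would flag unordered lists that use `*` or `+`. It's an illustrative configuration, not necessarily the one we use.

```yaml
# .remarkrc.yml: illustrative sketch, not necessarily our actual configuration
plugins:
  # A bundle of commonly recommended Markdown lint rules.
  - remark-preset-lint-recommended
  # Require "-" (never "*" or "+") as the unordered list marker.
  - [remark-lint-unordered-list-marker-style, "-"]
```

Running `remark . --frail` should then exit with a non-zero status whenever a rule reports a problem, which is what lets a CI check fail on it.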

Side note: Check out this lightning talk from the conference. It's not about Vale, but it gives a great overview of the types of things we're doing.

We evaluated numerous other tools, but in the end we chose Vale. We've found that it's easier for non-technical users to configure, and it lets us differentiate between objective and subjective suggestions through different error levels.

YAML configuration files

With Vale, you implement your styles as YAML files, one file per rule.

Example:

```yaml
extends: substitution
message: Use '%s' instead of '%s'
level: warning
ignorecase: false
# Maps tokens in form of bad: good
swap:
  "contrib": "contributed"
  "D6": "Drupal 6"
  "D7": "Drupal 7"
  "D8": "Drupal 8"
  "D9": "Drupal 9"
  "[Dd]rupalize.me": "Drupalize.Me"
  "Drupal to Drupal migration": "Drupal-to-Drupal migration"
  "drush": "Drush"
  "github": "GitHub"
  "in core": "in Drupal core"
  "internet": "Internet"
  "java[ -]?scripts?": JavaScript
  # ...
```

The above configuration file provides a list of common typos and their corrections. Because it's a YAML file, it's relatively easy for anyone to edit it and add substitutions. For these suggestions, we've set the error level to `warning`. When we run Vale, we can tell it to skip warnings and report only errors.

In another example, we have a style that enforces the Chicago Manual of Style rules for capitalizing a tutorial's title.

```yaml
extends: capitalization
message: "Tutorial title '%s' should be in title case"
level: error
scope: heading.h1
style: Chicago
# $title, $sentence, $lower, $upper, or a pattern.
match: $title
```

This is configured as an error.

Running it locally

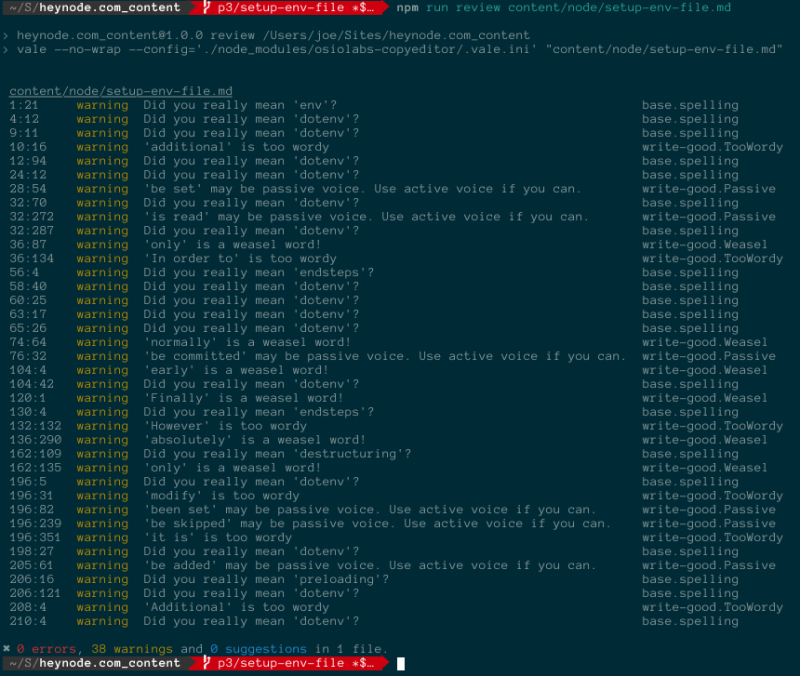

Everyone authoring, or reviewing, content can install Vale locally and run it with our specific styles. Doing so outputs a list of all the errors and warnings that Vale caught.

Example:

As a content author, I find this great because it helps me fix things before sending the content off for review. I don't have to worry about the disappointment of having someone send a tutorial back with endless nit-picks over my failure to remember every last detail of our style guide.

As a content reviewer, I get a good list of places to start looking for possible improvements, and I can feel confident spending more time on substantive review rather than hunting for incorrect uses of Javascript vs. JavaScript.

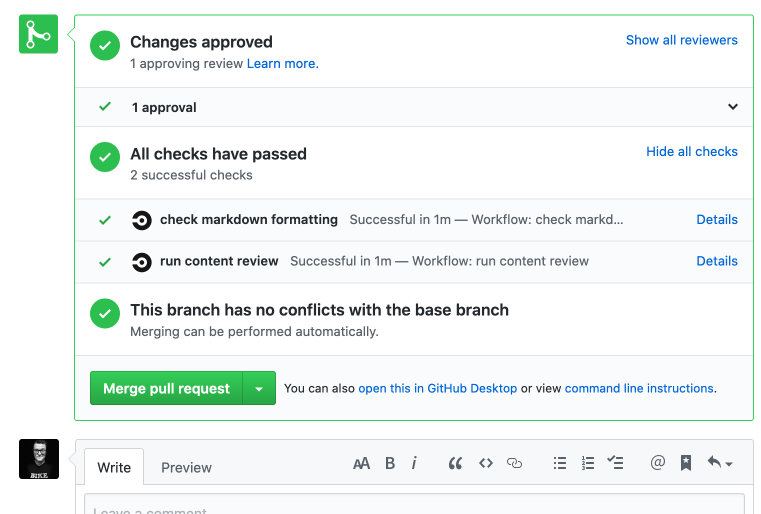

Automating it with CircleCI

Once we had an initial set of styles in place, we set up a CircleCI job that runs against each new pull request (the canonical version of all our content is stored in Git). As a result, the bottom of every pull request shows two checks: one for Vale rules and one for Markdown formatting. If either check detects an error, it shows up right away and can be fixed.

When we run Vale in CircleCI, we suppress all non-error suggestions, so it only marks a PR as failing if something is objectively wrong. These errors are usually quick to fix.
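A CircleCI job along these lines is one way to wire this up. It's a minimal sketch, not our production config, and it rests on several assumptions: a `.vale.ini` at the repository root whose `StylesPath` points at styles committed to the repo, content under a hypothetical `content/` directory, the `cimg/base` image, and a Vale release downloaded from GitHub (the version number is a placeholder). The `--minAlertLevel=error` flag is what hides warnings and suggestions, so only error-level rules can fail the check.

```yaml
# .circleci/config.yml: illustrative sketch, not our production config
version: 2.1

jobs:
  vale:
    docker:
      - image: cimg/base:stable
    steps:
      - checkout
      - run:
          name: Install Vale
          command: |
            # Placeholder version: pin whichever Vale release you have tested.
            VALE_VERSION=3.0.0
            curl -sfL "https://github.com/errata-ai/vale/releases/download/v${VALE_VERSION}/vale_${VALE_VERSION}_Linux_64-bit.tar.gz" \
              | tar -xz -C /tmp
      - run:
          name: Run Vale (errors only)
          # Warnings and suggestions are not reported, so only
          # error-level alerts make Vale exit non-zero.
          command: /tmp/vale --minAlertLevel=error content/

workflows:
  lint:
    jobs:
      - vale
```

The Markdown formatting check would be a second job in the same workflow; it's omitted here to keep the sketch short.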

Because we can switch a rule from warning to error by editing its configuration file, we can trial new rules before making them blocking. We can also set up rules that are useful to have while reviewing but don't need to block a piece of content from being published.
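For example, a subjective guideline like "don't use superlatives and hyperbole" could become a non-blocking rule. This is a hypothetical sketch (the file path, message, and word list are illustrative, not from our style guide) using Vale's `existence` check at the `suggestion` level, so it shows up when someone runs Vale locally but stays out of an errors-only CI run.

```yaml
# styles/Drupalize/Hyperbole.yml: hypothetical rule for illustration
extends: existence
message: "Consider removing the hyperbole '%s'."
# Non-blocking: hidden when Vale runs with --minAlertLevel=error.
level: suggestion
ignorecase: true
tokens:
  - amazing
  - awesome
  - incredibly
  - extremely
```

Promoting it later is a one-line change to `level`.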

Recap

To ensure that content reviewers can spend their time on the substance of a tutorial rather than on enforcing the style guide, we use Vale to help automate content review. It's helped us have more meaningful conversations about the content, and it has reduced the animosity that can arise when you feel someone is being hypercritical of your work.

If you work with a style guide, I highly recommend checking out Vale as a tool to help enforce it.
